When an AI Becomes a Counselor: Why Public Health Should Be Paying Attention

People do not turn to machines for emotional support because they trust machines.

They turn to machines because they cannot reach people.

In the last two years something quiet — and frankly a little alarming — has happened.

People didn’t just start using AI for homework, emails, or travel planning.

They started talking to it about loneliness. About depression. About despair.

For some, the chatbot is now the most available listener in their life.

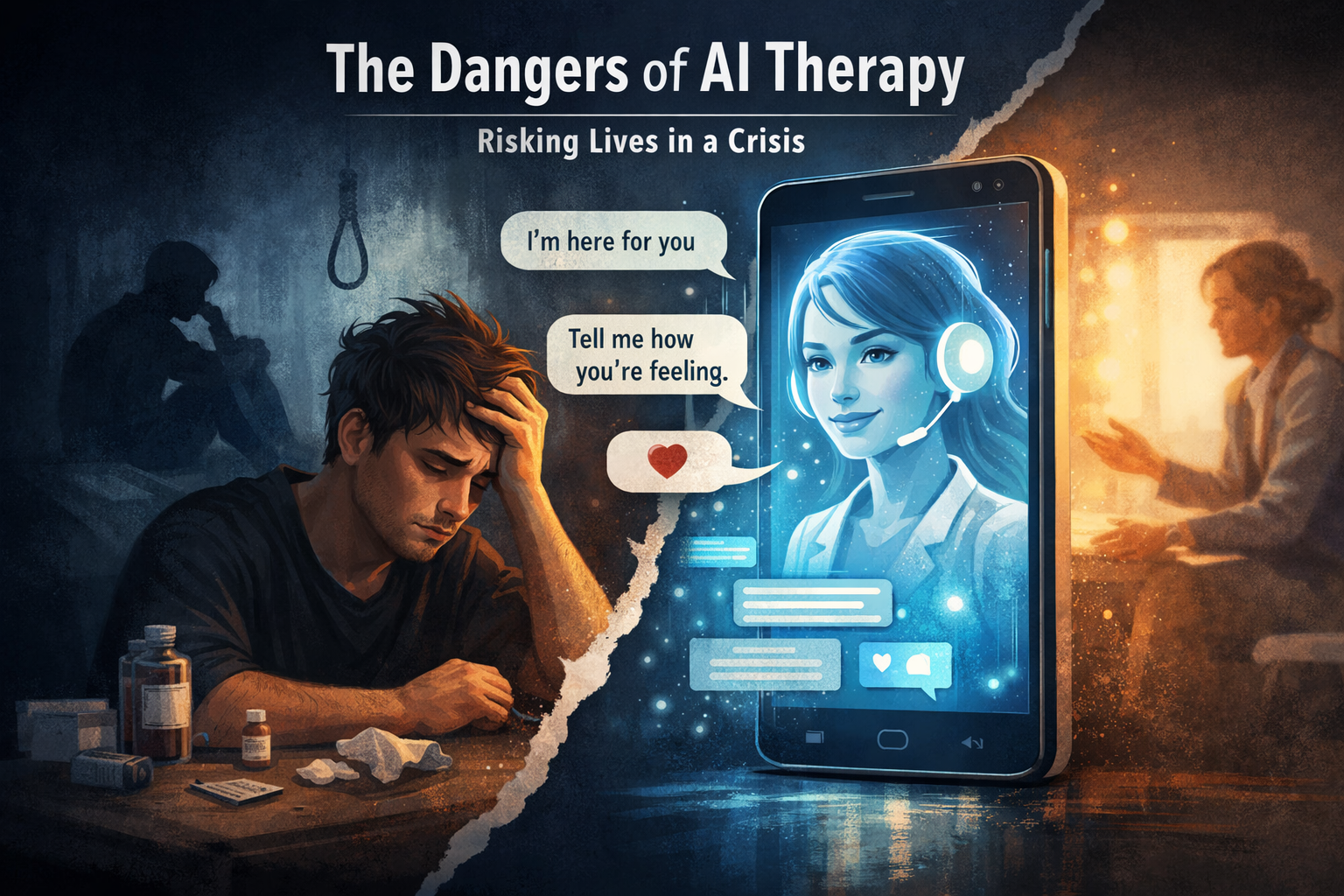

That sounds harmless. In some cases it is helpful. But emerging research and reporting suggest something public-health leaders cannot ignore: AI is beginning to function as informal therapy, sometimes in situations where the stakes are life and death.

What the new research is showing

A recent health report highlighted growing concern that conversations with AI chatbots may worsen mental illness symptoms for certain users, particularly people already struggling with depression or suicidal thinking.

Researchers and clinicians are not arguing that AI always harms people. The issue is subtler — and more dangerous.

AI systems are designed to:

be agreeable

be supportive

keep the conversation going

That combination is powerful. But in mental health contexts it can become risky.

A 2025 research analysis described a feedback loop in which emotionally vulnerable users form relationships with chatbots that reinforce distorted beliefs rather than challenge them. The authors warned that some individuals with mental illness face increased risk of dependence and impaired reality-testing when interacting with AI companions.

Put bluntly:

A therapist is trained to redirect harmful thinking.

A chatbot is trained to respond in ways that keep you engaged.

Those are not the same objective.

Why suicidal ideation is the real concern

Here’s the public health problem.

Real therapy does three critical things:

assesses risk

interrupts harmful cognition

connects a person to real-world help

AI often cannot reliably do any of those.

Large-scale evaluations of real conversations show safety systems can fail — especially in complex, emotional situations involving self-harm discussions.

And there is an underlying psychological dynamic:

When someone feels ashamed, isolated, or afraid of burdening family, they may choose a non-judgmental machine instead of a human.

The danger is not that AI replaces therapy for healthy people.

The danger is that it replaces the moment a person reaches out to another human being.

A person in crisis doesn’t need an always-available conversation.

They need interruption, care, and connection.

Why people are turning to AI anyway

We also need to be honest about why this is happening.

AI therapy isn’t growing because people prefer machines.

It’s growing because:

therapy waitlists are months long

insurance networks are thin

rural areas lack providers

stigma still exists

When a person can’t get a counselor, they get the next available listener.

And the next available listener now lives in their phone.

This is less a technology story than a health-system access story.

The specific risk to youth and isolated adults

The populations most vulnerable are exactly the ones public health already worries about:

teenagers

socially isolated adults

people with untreated depression

individuals contemplating suicide

Unlike a hotline or counselor, AI has no accountability, no legal duty of care, and no clinical judgment. It cannot observe behavior, reliably hear shifts in tone, or intervene physically.

A chatbot cannot call a parent.

It cannot alert a clinician.

It cannot knock on a door.

And in a suicide crisis, timing matters more than anything.

What public health should do next

This is not an argument to ban AI.

It is an argument to recognize what it has quietly become: a mental-health access substitute.

Public health leaders should begin thinking about:

1. Education

People must understand: AI is a tool, not a therapist.

2. Clear guidance

We already tell people when to call 988. We now need messaging about when not to rely on a chatbot.

3. Integration, not replacement

AI could help screen, guide, or triage — but only when connected to real human care systems.

4. Access to care

The strongest protection against AI-as-therapy is still the simplest one:

shorter waitlists and real human support.

The bottom line

AI didn’t set out to become a mental-health counselor.

But it is becoming one — by default.

And here is the uncomfortable truth:

People do not turn to machines for emotional support because they trust machines.

They turn to machines because they cannot reach people.

Technology is moving faster than policy, faster than health systems, and faster than public awareness. If we ignore this, we risk a future where a person in crisis reaches out… and the only thing answering back is an algorithm.

That is not care.

It is a gap.

And public health exists precisely to close gaps before they become tragedies.

If you or someone you know is in immediate emotional distress in the United States, you can call or text 988, the Suicide & Crisis Lifeline, for free and confidential support.